Take a look!

INTERNET OF SURFACES: Photo Examples of Screens of All Sizes

More pictures will be added.

Focused on interactive multimedia and emerging technologies to enhance the lives of people as they collaborate, create, learn, work, and play.

Aug 30, 2008

Aug 28, 2008

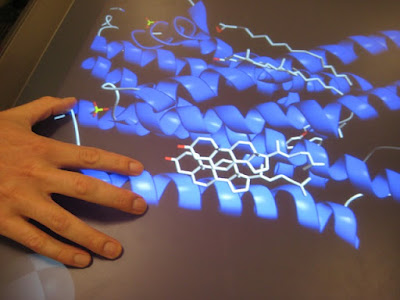

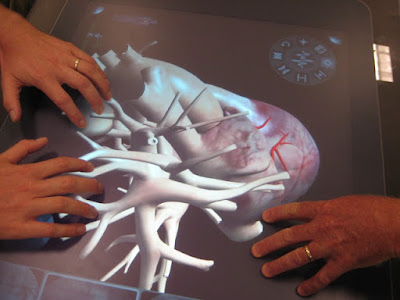

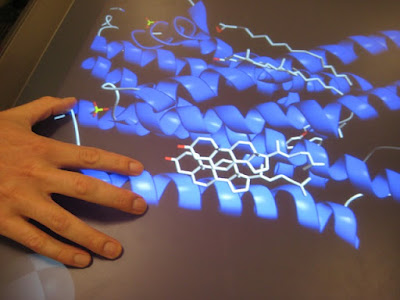

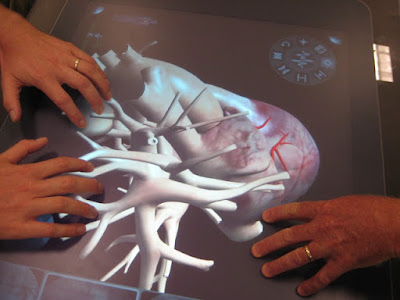

Surface Computing, Health, and Hands-on Science Education

"....from the first time I saw surface in Andy Wilson's lab at Microsoft Research, I knew it had healthcare written all over it. It has taken some time to bring together the right developers and partners to apply Surface technology in health, but we are finally there." -Bill Crounse, MD, Microsoft HealthBlog

As I've previously suggested, surface computing would be useful in K-12 education settings. Take a look at the graphics posted below, from a demonstration of Microsoft Surface in health care.

We know that one of the challenges in our public schools is to encourage more students to take STEM-related courses. (If you are not familiar with the acronym, STEM stands for Science, Technology, Engineering, and Mathematics.) One look at the hands-on graphics below might convince reluctant students to sign up for class!

The video of the 3D interactive heart simulation can be found at the bottom of the following post:

Microsoft Health Blog


Posted by

Lynn Marentette

Aug 27, 2008

Digital Lightbox for Hospitals - The Multi-touch Future of Electronic Medical Records?

Is this what the future holds for electronic medical records?

I came across this on Richard Bank's blog, rb.trends. This multi-touch display is from BrainLAB AG, a company located in Germany. Here is a quote from Ubergizmo:

"Digital Lightbox replaces the conventional light box used to observe analog x-ray images. Connected to the hospital PACS, the new digital platform can be installed both in meeting rooms and in operating rooms, where clinicians can then access, manipulate, and utilize data for surgery planning. By displaying the human body in 3D, Digital Lightbox helps clinicians to more clearly demonstrate to patients what effects a disease can have and which procedures may be necessary. Digital Lightbox enables clinicians to select the most valuable images from large amounts of existing medical data. Ergonomic touchscreen technology with zoom functionality makes working with data easy and effective. Clinicians can intuitively navigate within pictures and between settings. Image scrolling can be performed with one finger; zooming in and out of images with two. Images from different sources can also be fused easily. A measure functionality enables clinicians to set size and other dimensions."

Something like this would be good for high school science classrooms.
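The quote describes one-finger scrolling and two-finger zooming. BrainLAB's own implementation is not described in the source, so the following is only a minimal sketch, assuming the common approach in which the zoom factor is the ratio of the current distance between two fingers to their distance when the gesture began. The names `pinch_zoom` and `one_finger_scroll`, and the zoom limits, are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Touch:
    """A single finger position on the display, in pixels."""
    x: float
    y: float


def distance(a: Touch, b: Touch) -> float:
    """Straight-line distance between two touch points."""
    return math.hypot(b.x - a.x, b.y - a.y)


def pinch_zoom(start_a: Touch, start_b: Touch, now_a: Touch, now_b: Touch,
               base_zoom: float = 1.0, min_zoom: float = 0.25,
               max_zoom: float = 8.0) -> float:
    """Hypothetical two-finger zoom: scale by how far the fingers have spread.

    The min/max limits are illustrative assumptions, not product values.
    """
    start = distance(start_a, start_b)
    if start == 0:
        # Both fingers started on the same point; no meaningful ratio.
        return base_zoom
    zoom = base_zoom * distance(now_a, now_b) / start
    # Clamp so the image can't be zoomed out of sight or absurdly far in.
    return max(min_zoom, min(max_zoom, zoom))


def one_finger_scroll(offset: tuple, start: Touch, now: Touch) -> tuple:
    """Hypothetical one-finger scroll: move the image by the finger's drag."""
    return (offset[0] + now.x - start.x, offset[1] + now.y - start.y)
```

For example, two fingers that start 100 px apart and spread to 200 px give `pinch_zoom(Touch(0, 0), Touch(100, 0), Touch(0, 0), Touch(200, 0))`, a zoom factor of 2.0. Using a ratio rather than raw pixel movement keeps the gesture feeling the same at any starting finger spacing.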

Update:

For more photos of the Digital Lightbox and the iPlan Net software that supports remote collaboration, visit the Future-Making Serious Games blog.


Posted by

Lynn Marentette

Aug 23, 2008

Digital Students@Analog School videoclip from 2004: Do the sentiments of the students still ring true?

It is the beginning of the school year, the best time of year for educators to reflect seriously on the many ways they can play an important role in engaging and inspiring their students.


I learned about the above video today from "Back-to-School Tech Ideas for K-5", written by C.C. Long on her Tech Integration in Schools blog, and I thought it was worth sharing. The video was created by several college students in 2004, and can be found on TeacherTube. It is similar in spirit to the videos I included on a post about engaged learning earlier this year.

Here are a few quotes from the students in the video:

"We are more visual learners, we use different technologies to express ourselves, we don't use just pen and paper".

"99% of the teachers do it the old-fashioned way of... sit down, you listen to someone lecture for 40-50 minutes..."

"Just lectures, it limits my learning."

"There are several options in expressing yourself and expressing your viewpoints. And I think the university limits that."

"I was given one way, and that was how I had to do it."

"The professor still wants to teach it the same way they learned."

"I figured I'd have to write papers, I figured I'd have to do problem sets, but I thought there would be more options."

"It is frustrating, because it doesn't seems that college is accommodating the visual learner"

"Anywhere you go outside of the classroom, the technology is being used. I don't understand why we aren't applying it to class."

"Listen. Sit down and talk with me. Give me a choice to express myself in their class."

"I think it would make it more exciting for them."


"When I become a teacher, I am going to have to learn and assess that students are going to have even more that is accessible to them. And if I don't adapt to that, I'm going to start to lose my students."

My hunch is that many educators still do not feel comfortable keeping up with the world of the tech-savvy. To do so takes quite a bit of effort, time, and determination. And frustration. If you've worked in public schools for a while, you know what I mean. Much of the technology that educators have been handed over the years has been teacher-unfriendly.


Things are changing.

It has been six years since CAST and the Association for Supervision and Curriculum Development created an on-line "how-to" book about technology and the concept of Universal Design for Learning (UDL). This online multimedia book, Teaching Every Student in the Digital Age, still provides a good foundation for teachers who plan to integrate technology into teaching and learning activities to support all learners.

It is exciting to know that many school districts have initiated study groups and provide on-line resources for their teachers to support the implementation of UDL. At the university level, the concept of using technology to support universal design for instruction is not as alien as it might have been 10 years ago.

Mindful, reflective use of technology, including interactive multimedia technology, can support multiple means of learning, communication, collaboration, and knowledge sharing among all learners, no matter what age. In turn, an engaging and meaningful environment for learning can be sustained.

So what now?

If you are an educator, it wouldn't hurt to see what new educational applications have arrived at your school. Volunteer to be the teacher who teaches with the new interactive whiteboard. Sign up for the Wi-Fi laptop cart once a week for a semester. Hunt down the digital video cameras and do a search for where the video editing software might be hiding. Don't let the tech-savvy teacher down the hall hog it all, even if you consider yourself to be a technophobe!

Most importantly, establish a relationship with the technology "go-to" person at your school or university department, and see if it is not too late to order a few new technology tools. Find a few other people who have decided to do more with technology this year. Then sign up for a few workshops before your calendar is filled. There are no guarantees, but you just might have the best school year ever.

If you are new to this blog, do a search for what might interest you. I am sure you will find links to information that will help. Be sure to visit C.C. Long's Tech Integration in Schools blog for specific technology-related activities you can implement right away.

For reflection, take the time to watch the video clips on C.C. Long's blog that were used during Thinkfinity training.

For more inspiration, you might enjoy following a few of the links below:

Engaged Learning Revisited: Four videoclips for reflection....

Response to Intervention, Universal Design for Learning: Resources for Implementation

Visual Literacy and Multimedia Literacy Quotes - Odds and Ends PART TWO

Engaged Learning and Social Physics: Phun, an Interactive 2D Physics Sandbox

Updated MegaPost - Resources For All: Interactive Multimedia and Universal Design for Learning

Posted by

Lynn Marentette

Natural User Interface's new website shares information about the company's innovative multi-touch solutions...

Frequent visitors to this blog know that I've been following the development of the NUI multi-touch system since it was in the gestational stage, a university project of Harry van der Veen. I'd like to mention that as the NUI multi-touch table has evolved, so has the NUI website.

Take a look at the user-friendly, visually appealing website that showcases the Natural User Interface company's accomplishments, new products, and services. Here is some information from the site:

- "Natural User Interface (NUI) is an innovative emerging technology company specializing in advanced multi-touch software and service solutions. NUI's solutions can convert an ordinary surface into an interactive, appealing and intelligent display that creates a stunning user experience."

- "NUI provides both standardized as well as customized horizontal, vertical and angled multi-touch hardware solutions. With the wide variety of market leading suppliers and partner, NUI gives warranty on all our products."

For those of you who can't wait to dive into programming multi-touch, collaborative applications, take a look at NUI's Snowflake 1.0 software for OEM partners:

- "NUI Suite 1.0 Snowflake is an easy to use, robust, fast and reliable gesture recognition, computer vision, image processing, motion sensing multi-touch software package. It has been tested and developed for over 1,5 year and valued as best in the industry by our global hardware partners."

Posted by

Lynn Marentette

Aug 20, 2008

The Hidden Geometry of Hair: Computer Generated "Hollywood Hair" Unveiled at SIGGRAPH 2008

I came across a link to an article in the UCSD School of Engineering News today and just had to post it.

"HOLLYWOOD HAIR IS CAPTURED AT LAST: DETAILS IN SIGGRAPH 2008 PAPER"

Technology now exists that gives animators, video-game makers, and filmmakers the opportunity to capture images of real hairstyles that look and behave realistically. Below is a series of side-by-side comparisons of computer-generated heads of hair and the real thing.

From the article: "By determining the orientations of individual hairs, the researchers can realistically estimate how the hairstyle will shine no matter what angle the light is coming from. “You can’t just blend the highlights from two different angles to get a realistic highlight for a point in between,” said Chang.

“Instead of blending existing highlights, we create new ones.”

....One possible extension of this work: making an animated character’s hair realistically blow in the wind. This is possible because the researchers also developed a way to calculate what individual hair fibers are doing between the hairstyle surface and the scalp. They call this finding the “hidden geometry” of hair.

“Our method produces strands attached to the scalp that enable animation. In contrast, existing approaches retrieve only the visible hair layer,” the authors write in their SIGGRAPH 2008 paper. An animation of a hairstyle is available as a download from the “hair photobooth” Web site created by Sylvain Paris: http://people.csail.mit.edu/sparis/publi/2008/siggraphHair/

What we need is a real-life hair photobooth. Have a bad hair day? No problem. Walk in, "zap!", and walk out with real Hollywood hair. Maybe the hair scientists are working on it....

SIGGRAPH 2008 Paper citation: “Hair Photobooth: Geometric and Photometric Acquisition of Real Hairstyles,” by Sylvain Paris and Wojciech Matusik from Adobe Systems, Inc., Will Chang, Wojciech Jarosz and Matthias Zwicker from University of California, San Diego; and Oleg I. Kozhushnyan and Frédo Durand from Massachusetts Institute of Technology

Posted by

Lynn Marentette

Aug 18, 2008

Digital Storytelling, Multimodal Writing, Multiliteracies...

Digital storytelling, multimodal writing, and multiliteracies are overlapping concepts that weren't around during my first round as a university student. As more people of all ages create and share digital content on the web in new and imaginative ways, teachers and university scholars have taken notice. Is there a consensus that the printed word, as we've known it, is in the middle of a digital transformation?


Let's start out with digital storytelling.

By now, everyone knows about YouTube and vlogs as new means of communication. There is more to digital storytelling than uploading a few hastily put-together video clips from the family camcorder, or slapping together a PowerPoint presentation with a few bells and whistles. There are now some standards. Digital storytelling is an art.

The following definition is from the EDUCAUSE article 7 Things You Should Know About Digital Storytelling:

- "Digital storytelling is the practice of combining narrative with digital content, including images, sound, and video, to create a short movie, typically with a strong emotional component. Sophisticated digital stories can be interactive movies that include highly produced audio and visual effects, but a set of slides with corresponding narration or music constitutes a basic digital story. Digital stories can be instructional, persuasive, historical, or reflective. The resources available to incorporate into a digital story are virtually limitless, giving the storyteller enormous creative latitude. Some learning theorists believe that as a pedagogical technique, storytelling can be effectively applied to nearly any subject. Constructing a narrative and communicating it effectively require the storyteller to think carefully about the topic and consider the audience’s perspective."

Peter Kittle, from the Northern California Writing Project, Summer Institute 2008, touches on the topic of multimodal writing in Multimodal Texts: Composing Digital Documents. Related to this is the concept of digital writing.

"Multiliteracies is an approach to literacy which focuses on variations in language use according to different social and cultural situations, and the intrinsic multimodality of communications, particularly in the context of today's new media."

- "...it is no longer enough for literacy teaching to focus solely on the rules of standard forms of the national language. Rather, the business of communication and representation of meaning today increasingly requires that learners are able figure out differences in patterns of meaning from one context to another. These differences are the consequence of any number of factors, including culture, gender, life experience, subject matter, social or subject domain and the like. Every meaning exchange is cross-cultural to a certain degree." -from Kalantzis and Cope's Multiliteracies website

The Center for Digital Storytelling

Multimedia Storytelling

What are multimodality, multisemiotics, and multiliteracies?

(Ben Williamson, Futurelab)

Reading Images: Multimodality, Representation, and New Media

(Gunther Kress)

New Learning: Elements of a Science of Education

(Mary Kalantzis & Bill Cope)

Multiliteracies

The Multiliteracy Project

Multimodal Writing

http://multimodalwriting.com/

(new website, under development)

Multimedia Blogging

(a post from 2004, worth reading for historical context)

Thinking about multimodal assessment

(Digital Writing, Digital Teaching)

Standards related to digital writing

(from Teaching Writing Using Blogs, Wikis...)

I conclude this text-based post with a promise to incorporate more multimedia experiences in my upcoming posts... stay tuned.

Posted by

Lynn Marentette

Aug 16, 2008

MellaniuM: Virtual Reality, History, and Digital Heritage

Joe Rigby, from MellaniuM, focuses on the use of interactive virtual reality technology to create environments that support the learning of history. This concept is also known as "Digital Heritage". Applications are in the works that combine high-polygon modeling with scaled photo-realistic textures, incorporating multi-user avatar interaction within 3D archaeological visualizations.

MellaniuM will be presenting at VSMM '08: Conference on Virtual Systems and Multimedia Dedicated to Digital Heritage. Instead of a PowerPoint presentation, participants will be provided with a walk-through of the Theatre of Pompeii.

A future workshop, sponsored by ADSIP, the Applied Digital Signal and Image Processing Research Centre, will feature an EONREALITY multi-wall immersive system to display the latest version of the Theatre of Pompeii district. Public VR and Anne Weis, from the Department of Art History at the University of Pittsburgh, are collaborating on this project.

The original Pompeii Project was built at Carnegie Mellon's Studio for Creative Inquiry during the mid-1990s. Links to the Virtual Theater District (VRML) and 3D models can be found at http://artscool.cfa.cmu.edu:16080/~hemef/pompeii/project.html

If you have an interactive whiteboard, download the 3D models of Pompeii. You'll have to install a free VRML plug-in in order to view them on a web browser.

Wouldn't it be great if all students could learn about history via interactive virtual reality someday?

Microsoft Surface's Hotel Concierge Application: Let's see an affordable Surface, deployed in classrooms and libraries!

Microsoft Surface at the Sheraton Hotel:

Think how this could play out in classrooms, libraries, information centers, and other public spaces.

Need I say more?

(Cross posted on TechPsych and Technology-Supported Human-World Interaction blogs)


Posted by

Lynn Marentette

Microsoft Research project: MouseMischief - Multi-user, Multi-Mice Interaction on Large Displays

This is an interesting demonstration of the use of multiple mice, controlled by children on an interactive whiteboard. The collaborative application uses Microsoft's Multi-Point technology. For more information and free downloads, go to MouseMischief.org.
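The core idea behind multi-mouse interaction is that each physical mouse drives its own cursor on one shared display. MultiPoint's actual API is not shown here; this is a hypothetical Python sketch, assuming each mouse reports relative movement tagged with a device ID (the `SharedDisplay` class and the device IDs are illustrative names, not part of any Microsoft SDK).

```python
from dataclasses import dataclass, field


@dataclass
class SharedDisplay:
    """Hypothetical shared screen that keeps one cursor per mouse."""
    width: int
    height: int
    # Maps a device ID (e.g. one per child's mouse) to its (x, y) cursor.
    cursors: dict = field(default_factory=dict)

    def move(self, device_id: str, dx: int, dy: int) -> tuple:
        """Apply one mouse's relative motion to that mouse's cursor only."""
        # A newly seen mouse starts its cursor in the center of the screen.
        x, y = self.cursors.get(device_id, (self.width // 2, self.height // 2))
        # Clamp so every cursor stays on the visible display.
        x = max(0, min(self.width - 1, x + dx))
        y = max(0, min(self.height - 1, y + dy))
        self.cursors[device_id] = (x, y)
        return (x, y)
```

With `display = SharedDisplay(1024, 768)`, calling `display.move("mouse-1", 10, 0)` returns `(522, 384)`, while `display.move("mouse-2", -600, 0)` pins the second child's cursor at the left edge, `(0, 384)`, without disturbing the first. Keying state by device ID is what lets several children act on the whiteboard at once.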

Posted by

Lynn Marentette

Aug 9, 2008

The Internet of Surfaces? Microsoft's Pete Thompson discusses screens and surfaces of all sizes.

I came across this video of Microsoft Surface's general manager, Pete Thompson, on the GottaBeMobile website. According to Thompson, the people who worked on TouchWall, which is discussed in the video, were also involved with Microsoft Surface.

Apparently, the Surface and Wall folks at Microsoft aren't sure what they are doing with screens of all sizes.

(If you are interested in surface form factors, see my previous blog post, Emerging Interactive Technologies, Emerging Interactions, and Emerging Integrated Form Factors.)

There are many unanswered questions from my perspective.

Bill Gates predicts that every surface will be a computer, a concept that is echoed in the video. If so, what are people doing to ensure that surface-supporting environments are universally designed?

Before Microsoft and other companies unleash "surface" technology to the masses, they must get a few things right.

Will they?

Will the researchers at Microsoft find out how various screens play out in classrooms, in community settings, on the job, and in between?

For example, large interactive displays in urban and retail settings have the potential to provide people with a wealth of information about what is around them. These displays serve little purpose if they are user-unfriendly, and no purpose at all if they are not accessible.

If developers and designers are not following basic user-centered design guidelines and usability standards now, how can we expect the nextgen systems of display surfaces to support universal usability? As our population ages, this will be more of a problem.

From what I can tell, there will be more opportunities for people to use their mobile devices to interact with larger screens and surfaces when they are out and about. For example, when I was at the airport recently, I noticed that there was a large display that offered cell-phone ringtone downloads. Microsoft was behind this display.

Interconnectivity and interoperability between devices and screens of all sizes is important to think about. If universal usability guidelines are not followed, our mobile devices will be difficult to use in the world of surfaces.

It isn't much of a leap to see the big picture. Just think about the problems we have with our remote controls and entertainment centers in our homes! We might have to carry all sorts of devices just to get from point A to point B. I'm not kidding. It will not be a pretty sight, especially if the privacy and security issues are not resolved as we move to a world that supports the internet of things.

Or shall I say, the internet of surfaces?

Posted by

Lynn Marentette

Creative Programming: openFrameworks - AWESOME for interactive multimedia applications!

openFrameworks: Better Tools, Enhanced Creativity, Better Projects: YES. Artists can make tools at the same time they make artwork.

To learn all about this, delve into the video. It highlights interviews with creative people who are using openFrameworks, including their innovative work.

made with openFrameworks from openFrameworks on Vimeo.

If you are working with openFrameworks, or thinking about it, let me know.

This looks like a great tool to use for projects I'm creating for my new HP TouchSmart....

.....and my multi-touch thought experiments ; }

I learned about openFrameworks from Seth Sandler, aka "cerupcat", a member of NUI-group who was chosen to participate in Google's Summer of Code. He's posted about his progress on his AudioTouch blog.

Here is a screenshot of Seth's tracking application, which is still under development. It is the result of porting touchlib, the main tracker used by NUI-Group members, to openFrameworks:


Posted by

Lynn Marentette

Aug 6, 2008

Video modeling software for visual learners and those who have autism spectrum disorders

Activity Trainer, video modeling software from Accelerations Educational Software, supports the following activities:


- Academic

- Communication

- Daily Living

- Non-Verbal Imitation

- Recreation

- Social

- Vocational

A free 30-day trial of the software can be downloaded from the Accelerations Educational Software website.

On the Accelerations Educational Software website, you can find other products, such as Storymovies, which is the product of a collaboration between Carol Gray (social stories), Mark Shelley, and the Special Minds Foundation.

Posted by

Lynn Marentette

"The Next Big Thing in Humanities, Arts, and Social Science Computing: Cultural Analytics": Link

I could remain an undeclared graduate student for life!

Last semester, I took a course in information visualization and visual communication. What next?!

Cultural Analytics.

I just heard of this area of study today. Here is a link to an article, written by Kevin D. Franklin and Karen Rodriguez, in HPCwire:

The Next Big Thing in Humanities, Arts, and Social Science Computing: Cultural Analytics

"some see the reciprocal and perhaps limitless possibilities of emergent technologies and humanities scholarship -- how digital technology cuts across disciplines, creates new ways of looking at artifacts, as well as producing new forms itself."

This speaks to my multidisciplinary soul.

-photo via HPCwire

Posted by

Lynn Marentette

Aug 5, 2008

Mozilla's Concept Series: A call for collaborative participation. Demos look like they would work well on a touch screen...

Mozilla Labs has issued a call for participation in the development of next-generation web interaction design. "Be bold. Be radical. The crazier, the better. Let’s explore the future together."

-Mozilla Labs

The following videos give a good overview of the innovations initially created for this new endeavor:

Aurora, created by Adaptive Path, is an adaptive browser interaction concept, incorporating radial and wheel menus, data visualization objects, a 3D navigation system, and more.

Aurora (Part 1) from Adaptive Path on Vimeo.

Bookmarking and History Concept Video from Aza Raskin on Vimeo.

Firefox Mobile Concept Video from Aza Raskin on Vimeo.

Excerpt about this collaborative project, from the Mozilla Labs website:

"Today we’re calling on industry, higher education and people from around the world to get involved and share their ideas and expertise as we collectively explore and design future directions for the Web.

You don’t have to be a software engineer to get involved, and you don’t have to program. Everyone is welcome to participate. We’re particularly interested in engaging with designers who have not typically been involved with open source projects. And we’re biasing towards broad participation, not finished implementations.

We’re hoping to lower the barrier to participation by providing a forum for surfacing, sharing, and collaborating on new ideas and concepts. Our goal is to bring even more people to the table and provoke thought, facilitate discussion, and inspire future design directions for Firefox, the Mozilla project, and the Web as a whole."

-via Putting People First

This will be an interesting trend to follow. I'd like to work on web navigation systems optimized for multi-touch and large-screen displays. Is this now possible?

Posted by

Lynn Marentette

Aug 3, 2008

iPhone: Multitouch control of a laptop screen!

The Media Computing Group at RWTH Aachen University has developed an application that lets users control a laptop with a multi-touch iPhone. Although the demo focuses on rotating and resizing images, the "multiple views" section is the most interesting part. As with an Etch A Sketch, you shake the iPhone to reset the images.
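The rotate and resize interaction in the demo is the familiar two-finger gesture. As a rough sketch, and not the RWTH Aachen group's actual code, here is one common way to turn two moving touch points into a scale factor and a rotation angle. The function name `pinch_transform` and the (x, y) tuple format for touches are assumptions made for illustration.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # hypothetical (x, y) touch coordinate


def pinch_transform(p1_start: Point, p2_start: Point,
                    p1_now: Point, p2_now: Point) -> Tuple[float, float]:
    """Return (scale, rotation_radians) implied by moving two touches.

    Scale is the ratio of the current finger distance to the starting
    distance; rotation is the change in angle of the line joining the fingers.
    """
    # Vector from the first finger to the second, at the start and now.
    dx0, dy0 = p2_start[0] - p1_start[0], p2_start[1] - p1_start[1]
    dx1, dy1 = p2_now[0] - p1_now[0], p2_now[1] - p1_now[1]

    d0 = math.hypot(dx0, dy0)
    if d0 == 0:
        raise ValueError("starting touches must be at distinct points")
    scale = math.hypot(dx1, dy1) / d0

    rotation = math.atan2(dy1, dx1) - math.atan2(dy0, dx0)
    # Wrap into [-pi, pi] so a small twist never reads as nearly a full turn.
    rotation = math.atan2(math.sin(rotation), math.cos(rotation))
    return scale, rotation


# Fingers start 100 px apart horizontally, end 200 px apart vertically:
# the image should double in size and turn a quarter turn.
scale, angle = pinch_transform((0, 0), (100, 0), (0, 0), (0, 200))
print(scale, math.degrees(angle))
```

An application would multiply the image's stored scale by `scale` and add `angle` to its stored rotation on each touch update; measuring against the gesture's starting points rather than the previous frame keeps rounding errors from accumulating.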

I've been toying with ideas about ways to create user-friendly interactions between screens of all sizes. This approach intrigues me.

You can find more information about the iPhone and Cocoa on the Multi-touch Framework website. The contact for this project is Stefan Hafeneger, a student assistant.

Posted by

Lynn Marentette
